Explaining Hyperparameter Optimization via Partial Dependence Plots

- Authored by

- Julia Moosbauer, Julia Herbinger, Giuseppe Casalicchio, Marius Lindauer, Bernd Bischl

- Abstract

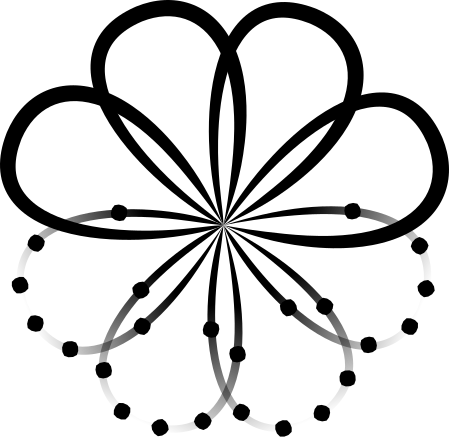

Automated hyperparameter optimization (HPO) can help practitioners obtain peak performance in machine learning models. However, there is often a lack of valuable insights into the effects of different hyperparameters on the final model performance. This lack of explainability makes it difficult to trust and understand the automated HPO process and its results. We suggest using interpretable machine learning (IML) to gain insights from the experimental data obtained during HPO with Bayesian optimization (BO). BO tends to focus on promising regions with potentially high-performing configurations and thus induces a sampling bias. Hence, many IML techniques, such as the partial dependence plot (PDP), carry the risk of generating biased interpretations. By leveraging the posterior uncertainty of the BO surrogate model, we introduce a variant of the PDP with estimated confidence bands. We propose to partition the hyperparameter space to obtain more confident and reliable PDPs in relevant sub-regions. In an experimental study, we provide quantitative evidence for the increased quality of the PDPs within sub-regions.
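The idea of a PDP with confidence bands derived from surrogate uncertainty can be illustrated with a minimal sketch. Everything below is an assumption made for illustration and is not taken from the paper: the toy loss, the hand-written zero-mean Gaussian process with a fixed RBF length scale, the helper names (`rbf_kernel`, `gp_posterior`, `pdp_with_bands`), and the variance estimate, which treats the PDP value as an average of jointly Gaussian posterior values and may differ from the authors' estimator. The training points are deliberately clustered around the optimum to mimic the sampling bias of BO.

```python
import numpy as np

def rbf_kernel(a, b, length_scale=0.3):
    """Squared-exponential kernel between the rows of a and the rows of b."""
    sq_dist = ((a[:, None, :] - b[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-0.5 * sq_dist / length_scale**2)

def gp_posterior(x_train, y_train, x_query, noise=1e-4):
    """Posterior mean and full covariance of a zero-mean GP at x_query."""
    k_tt = rbf_kernel(x_train, x_train) + noise * np.eye(len(x_train))
    k_qt = rbf_kernel(x_query, x_train)
    k_qq = rbf_kernel(x_query, x_query)
    # Solve once for both the targets and the cross-covariances.
    solved = np.linalg.solve(k_tt, np.column_stack([y_train, k_qt.T]))
    mean = k_qt @ solved[:, 0]
    cov = k_qq - k_qt @ solved[:, 1:]
    return mean, cov

def pdp_with_bands(x_train, y_train, x_mc, feature, grid, z=1.96):
    """PDP estimate for one hyperparameter plus a band of +/- z standard deviations."""
    means, sds = [], []
    for value in grid:
        x_mod = x_mc.copy()
        x_mod[:, feature] = value          # fix the hyperparameter of interest
        mean, cov = gp_posterior(x_train, y_train, x_mod)
        means.append(mean.mean())          # average over the other hyperparameters
        # Assumed estimator: variance of an average of jointly Gaussian values
        # equals the mean of all posterior covariance entries.
        sds.append(np.sqrt(max(cov.mean(), 0.0)))
    means, sds = np.array(means), np.array(sds)
    return means, means - z * sds, means + z * sds

rng = np.random.default_rng(0)
# Hypothetical BO archive: configurations concentrated near the optimum (0.2, 0.2).
x_train = np.clip(rng.normal(0.2, 0.1, size=(30, 2)), 0.0, 1.0)
y_train = ((x_train - 0.2) ** 2).sum(axis=1)   # toy validation loss
x_mc = rng.uniform(0.0, 1.0, size=(50, 2))      # Monte Carlo sample of the space
grid = np.linspace(0.0, 1.0, 11)

means, lower, upper = pdp_with_bands(x_train, y_train, x_mc, feature=0, grid=grid)
width = upper - lower
for g, m, w in zip(grid, means, width):
    print(f"lambda_0={g:.1f}  pdp={m:.3f}  band_width={w:.3f}")
```

In this toy setup the band is narrower near the densely sampled region around 0.2 than at the sparsely sampled edge near 1.0, which is the kind of signal that would motivate restricting the PDP to a sub-region where the surrogate is more confident.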

- Organisation(s)

- Machine Learning Section

- Institute of Information Processing

- External Organisation(s)

- Ludwig-Maximilians-Universität München (LMU)

- Type

- Conference contribution

- No. of pages

- 21

- Publication date

- 08.11.2021

- Publication status

- E-pub ahead of print

- Peer reviewed

- Yes

- Electronic version(s)

- https://arxiv.org/abs/2111.04820 (Access: Open)

- https://proceedings.neurips.cc/paper/2021/file/12ced2db6f0193dda91ba86224ea1cd8-Paper.pdf (Access: Open)